How I Learned to Stop Worrying and Love AI

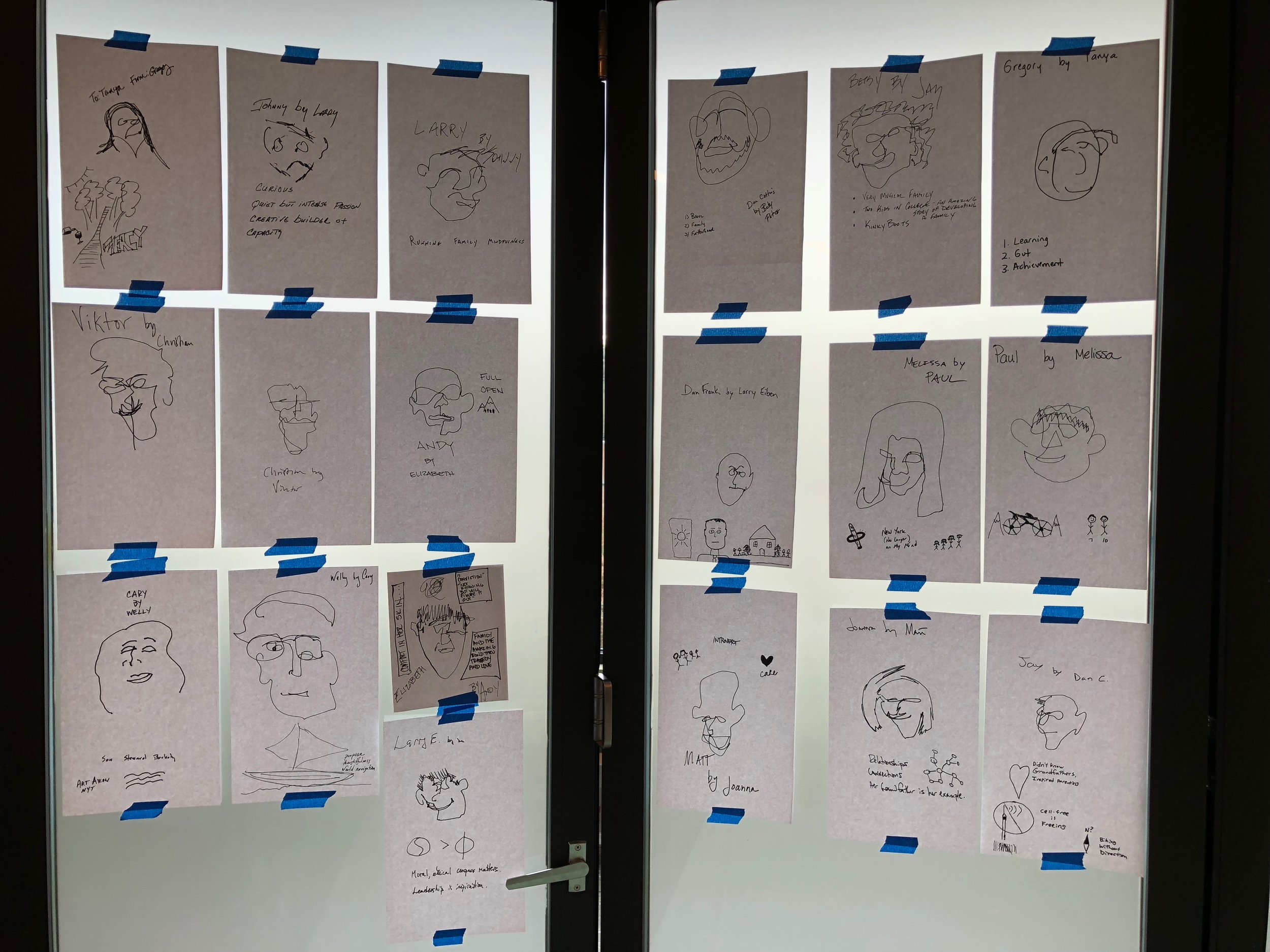

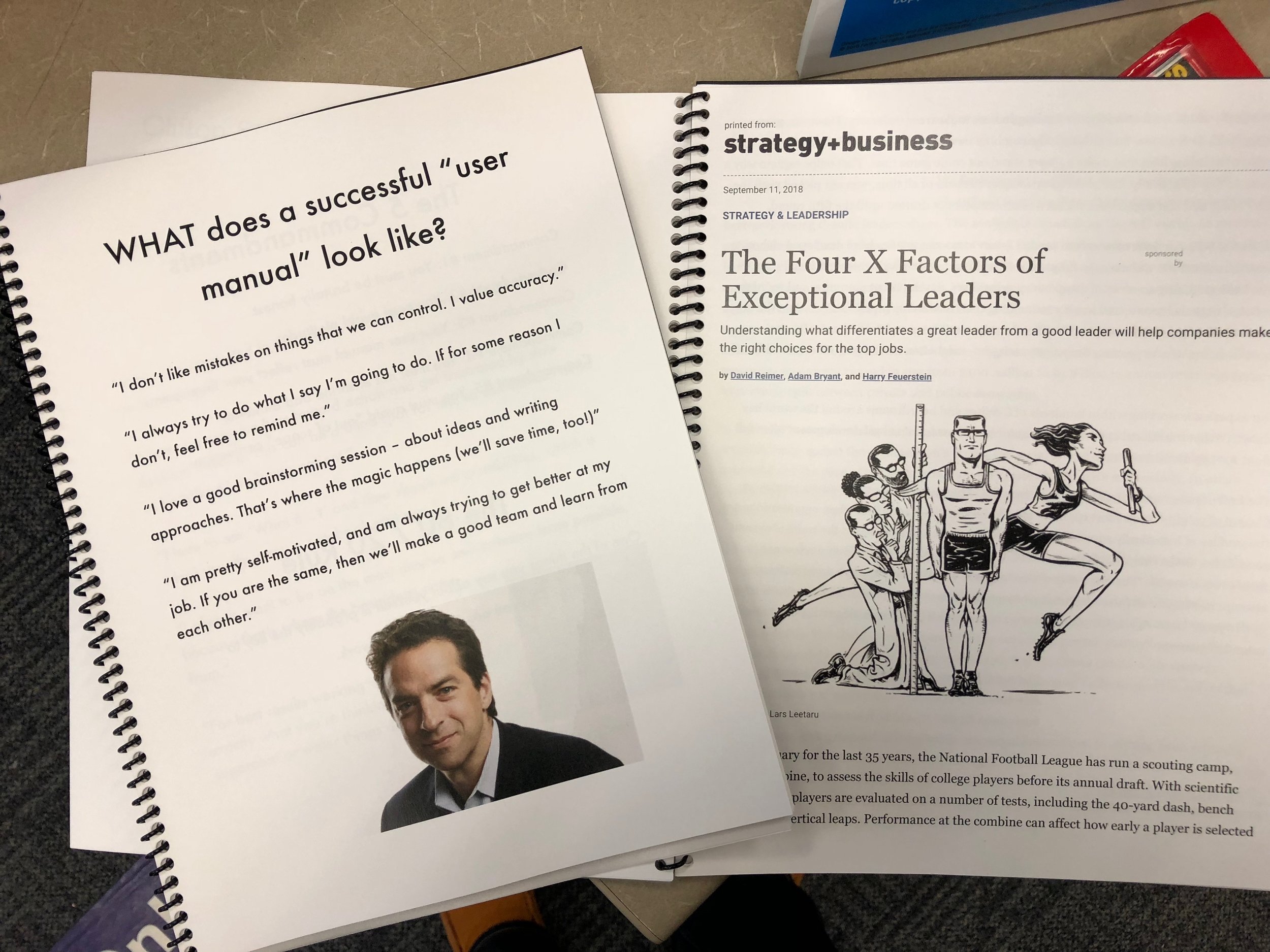

How might leaders use technology to increase or restore human agency? Participants at Signals x Richmond brainstorm a new narrative around the future of leadership and learning. Signals workshops convene leaders whose diverse forms of thinking are harmonized by Basecamp’s values: Questions > Answers and We > Me.

What does the rise of artificial intelligence (AI) mean for schools and learning?

At Signals x Richmond, hosted by The Steward School, Si McAleer from IBM pointed out that predictions about AI tend to devolve into either magical thinking (“It will cure cancer!”) or apocalyptic visions (“It will destroy humankind!”).

The Basecamp team has a different point of view. Informed by ideas from Wired co-founder Kevin Kelly, we suspect that AI will evolve into an array of diverse intelligences that won’t replace humans, but rather complement us:

“The whole point of AI is that it doesn’t think like us. Evolution has taken biological life only so far in making different kinds of minds. We’re going to use technology to extend and fill the space of possible ways of thinking.”

If AI thinks differently than we think, shouldn’t we design opportunities for learners to:

- learn how they think best
- recognize the diverse forms of intelligence (cognitive, social, emotional) in others
- collaborate in diverse teams?

As AIs become sufficiently advanced, learners can add them to their teams—not as tools, but as teammates.

After all, as Morgan Housel has put it, there are “different kinds of smart”:

“ ‘Smart’ is the ability to solve problems. Solving problems is the ability to get stuff done. And getting stuff done requires way more than math proofs and rote memorization.

“Being an expert in economics would help you understand the world if the world were governed purely by economics. But it’s not. It’s governed by economics, psychology, sociology, biology, physics, politics, physiology, ecology, and on and on.”

In that kind of world, how might we form learners who value and can harness diverse forms of intelligence—including artificial intelligence?

(More scenes from Signals x Richmond below)

***

Thank you for reading this post from Basecamp's blog, Ed:Future.